A Unified NLP Framework for LLMs.

Terms of Use

The omnes-flores Python module is published under the Apache License, Version 2.0. The dedicated models for omnes-flores are distributed under the licenses inherited from the Universal Dependencies treebanks used for training.

To use the base model google/gemma-2-9b, you must agree to the terms of use in your HuggingFace account.

To access Gemma on Hugging Face, you’re required to review and agree to Google’s usage license. To do this, please ensure you’re logged in to Hugging Face and click below. Requests are processed immediately.

Requirements

The omnes-flores technology preview requires a Linux environment with NVIDIA GPUs (Ampere or later).

To run inference on the 9B parameter base model + LoRA using bfloat16, 24GB or more of GPU memory is required.
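
As a rough sanity check on the 24GB figure, the memory taken by the weights alone can be estimated from the parameter count and the 2-byte width of bfloat16. The sketch below assumes a nominal 9 billion parameters and ignores the LoRA adapter, activations, and the KV cache, which is why headroom beyond the weight size is needed. The function name is illustrative and not part of omnes-flores.

```python
def weight_memory_gb(num_params: float, bytes_per_param: int = 2) -> float:
    """Estimate the memory needed for model weights, in GB (1 GB = 10**9 bytes)."""
    return num_params * bytes_per_param / 1e9


# Assumption: a nominal 9e9 parameters for the 9B base model.
# bfloat16 stores each parameter in 2 bytes.
base_weights_gb = weight_memory_gb(9e9)
print(f"Approximate weight memory: {base_weights_gb:.0f} GB")
```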

The following environment has been tested:

- NVIDIA RTX Pro 6000 Blackwell 96GB

- CPU RAM 64GB

- Ubuntu 24.04

- CUDA 12.8

- Python 3.12

- vLLM 0.16.0

- Transformers 4.57.6

We are planning to support Apple Silicon (MLX) in the near future.

Install

Install the library into a virtual environment with pip:

$ python3 -m venv venv

$ source venv/bin/activate

$ pip install omnes-flores
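
To confirm that the package landed in the active virtual environment, one option is to query the installed distribution metadata from Python. This is a minimal sketch using only the standard library; the helper name `installed_version` is hypothetical.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of `package`, or None if it is not installed."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


if __name__ == "__main__":
    found = installed_version("omnes-flores")
    print(found if found else "omnes-flores is not installed in this environment")
```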

Setup HuggingFace

To use the base model google/gemma-2-9b, you have to agree to the terms of use by following the steps below:

- Log in to HuggingFace with your HuggingFace account.

- Open the google/gemma-2-9b page.

- Read the descriptions in the Access Gemma on Hugging Face panel and proceed to Acknowledge license if you agree to the contract.

Next, generate an access token on the settings page of your HuggingFace account:

- Open the Access Tokens page and then push the + Create new token button.

- In the User permissions (your-account-name) section of the Create new Access Token page:

  - Select Fine-grained in the Token type field (default).

  - Fill read-gated-repos in the Token name field.

  - Check the following items in the Repositories section.

    - Read access to contents of all repos under your personal namespace

    - View access requests for all gated repos under your personal namespace

    - Read access to contents of all public gated repos you can access

- Push the Create token button at the bottom of the page.

- In the Save your Access Token dialog, copy the access token beginning with hf_ by pushing the Copy button and then save it in an appropriate secure place.

Finally, log in via the CLI with the access token:

- From the Python environment in which you installed omnes-flores, execute hf auth login and paste the access token.

- If the login is successful, the following will be displayed:

$ hf auth login

...

Enter your token (input will not be visible):

...

Login successful.

The current active token is: `read-gated-repos`

Run Models

40-lang-41-treebank-v0 (CC BY-SA 4.0)

This model is available for commercial use.

$ omnes-flores < text_file > conllu_file

In the command above, the input text_file is plain text in any language, and sentences do not need to be separated by line breaks.

To improve inference efficiency, the input is batched automatically, but a blank line in the input always ends the current batch.

When processing interactively via standard input, you can press Enter twice to get immediate inference.
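
The role of blank lines as boundaries can be pictured with a small sketch that splits plain text into blocks at blank lines. This only illustrates the input convention described above; how omnes-flores actually forms its batches internally is not documented here, and the function name `split_into_blocks` is hypothetical.

```python
def split_into_blocks(text: str) -> list[str]:
    """Split text into blocks at blank lines, dropping empty blocks."""
    blocks: list[str] = []
    current: list[str] = []
    for line in text.splitlines():
        if line.strip():
            # A non-blank line belongs to the block being collected.
            current.append(line)
        elif current:
            # A blank line closes the current block.
            blocks.append("\n".join(current))
            current = []
    if current:
        blocks.append("\n".join(current))
    return blocks


sample = "First block. It has two sentences.\nStill the first block.\n\nSecond block."
blocks = split_into_blocks(sample)
print(len(blocks))
```

Lines inside a block may still hold several sentences each, since sentence segmentation is left to the model.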

For details of the CoNLL-U output format, see the official Universal Dependencies page.
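
For downstream processing, a CoNLL-U file can be read with a few lines of standard-library Python. The sketch below relies only on the general CoNLL-U conventions (ten tab-separated fields per token line, comment lines starting with #, blank lines between sentences). The sample is hand-written for illustration and is not actual omnes-flores output, and the function name `parse_conllu` is hypothetical.

```python
FIELDS = ("id", "form", "lemma", "upos", "xpos", "feats", "head", "deprel", "deps", "misc")


def parse_conllu(text: str) -> list[list[dict[str, str]]]:
    """Parse CoNLL-U text into a list of sentences, each a list of token dicts."""
    sentences: list[list[dict[str, str]]] = []
    tokens: list[dict[str, str]] = []
    for line in text.splitlines():
        if not line.strip():
            # A blank line ends the current sentence.
            if tokens:
                sentences.append(tokens)
                tokens = []
        elif line.startswith("#"):
            # Comment lines (for example "# text = ...") are skipped here.
            continue
        else:
            columns = line.split("\t")
            if len(columns) == len(FIELDS):
                tokens.append(dict(zip(FIELDS, columns)))
    if tokens:
        sentences.append(tokens)
    return sentences


# Hand-written sample, not produced by the model.
sample = (
    "# text = Dogs bark.\n"
    "1\tDogs\tdog\tNOUN\t_\t_\t2\tnsubj\t_\t_\n"
    "2\tbark\tbark\tVERB\t_\t_\t0\troot\t_\tSpaceAfter=No\n"
    "3\t.\t.\tPUNCT\t_\t_\t2\tpunct\t_\t_\n"
    "\n"
)
parsed = parse_conllu(sample)
for token in parsed[0]:
    print(token["id"], token["form"], token["upos"], token["head"], token["deprel"])
```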

This model was trained using training data from 40 UD languages, consisting of 41 treebanks.

The Japanese word unit is the Long Unit Word (LUW, 国語研長単位) defined by the National Institute for Japanese Language and Linguistics.

The following 40 UD treebanks, which have both a commercially available license and over 40k UD tokens in the train set, were selected to train the LoRA models of omnes-flores-40-lang-41-treebank-v0.

- UD_Armenian-ArmTDP, UD_Belarusian-HSE, UD_Bororo-BDT, UD_Chinese-GSD, UD_Chinese-GSDSimp, UD_Croatian-SET, UD_Czech-CAC, UD_Danish-DDT, UD_Dutch-Alpino, UD_English-EWT, UD_Estonian-EWT, UD_Finnish-TDT, UD_French-GSD, UD_German-GSD, UD_Haitian_Creole-Adolphe, UD_Hebrew-IAHLTwiki, UD_Icelandic-GC, UD_Indonesian-GSD, UD_Irish-IDT, UD_Japanese-GSDLUW, UD_Korean-Kaist, UD_Latvian-LVTB, UD_Lithuanian-ALKSNIS, UD_Naija-NSC, UD_Norwegian-Nynorsk, UD_Persian-PerDT, UD_Portuguese-Porttinari, UD_Romanian-RRT, UD_Russian-GSD, UD_Scottish_Gaelic-ARCOSG, UD_Serbian-SET, UD_Sindhi-Isra, UD_Slovak-SNK, UD_Slovenian-SSJ, UD_Spanish-GSD, UD_Swedish-Talbanken, UD_Thai-TUD, UD_Turkish-BOUN, UD_Ukrainian-ParlaMint, UD_Western_Armenian-ArmTDP

In addition, a proprietary treebank was used for training, which was specially licensed from the National Institute for Japanese Language and Linguistics exclusively for training this model.

- UD_Japanese-BCCWJLUW (excluding PN newspaper articles)
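
The selection rule stated above (a license that permits commercial use and more than 40k UD tokens in the train set) amounts to a simple filter over treebank metadata. The sketch below uses made-up placeholder entries; the names, license flags, and token counts are hypothetical and do not describe any real treebank.

```python
from dataclasses import dataclass

MIN_TRAIN_TOKENS = 40_000  # threshold stated in this README


@dataclass
class Treebank:
    name: str
    commercial_use_allowed: bool
    train_tokens: int


def select_for_training(treebanks: list[Treebank]) -> list[str]:
    """Keep treebanks that allow commercial use and exceed the token threshold."""
    return [
        tb.name
        for tb in treebanks
        if tb.commercial_use_allowed and tb.train_tokens > MIN_TRAIN_TOKENS
    ]


# Hypothetical placeholder metadata, for illustration only.
candidates = [
    Treebank("UD_Example-A", True, 120_000),
    Treebank("UD_Example-B", False, 300_000),  # excluded: license
    Treebank("UD_Example-C", True, 15_000),    # excluded: too few tokens
]
selected = select_for_training(candidates)
print(selected)
```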

40-lang-42-treebank-v0 (CC BY-SA 4.0)

This model is available for commercial use.

$ omnes-flores --m megagonlabs/omnes-flores-40-lang-42-treebank-v0 < text_file > conllu_file

This model uses the Corpus of Everyday Japanese Conversation (CEJC) as part of its training data. To handle the ungrammatical contexts found in fragmentary everyday speech, it uses as the Japanese word unit the Short Unit Word (SUW, 国語研短単位) defined by the National Institute for Japanese Language and Linguistics, which does not presuppose bunsetsu structure.

The following 40 UD treebanks, which have both a commercially available license and over 40k UD tokens in the train set, were selected to train the LoRA models of omnes-flores-40-lang-42-treebank-v0.

- UD_Armenian-ArmTDP, UD_Belarusian-HSE, UD_Bororo-BDT, UD_Chinese-GSD, UD_Chinese-GSDSimp, UD_Croatian-SET, UD_Czech-CAC, UD_Danish-DDT, UD_Dutch-Alpino, UD_English-EWT, UD_Estonian-EWT, UD_Finnish-TDT, UD_French-GSD, UD_German-GSD, UD_Haitian_Creole-Adolphe, UD_Hebrew-IAHLTwiki, UD_Icelandic-GC, UD_Indonesian-GSD, UD_Irish-IDT, UD_Japanese-GSDLUW, UD_Korean-Kaist, UD_Latvian-LVTB, UD_Lithuanian-ALKSNIS, UD_Naija-NSC, UD_Norwegian-Nynorsk, UD_Persian-PerDT, UD_Portuguese-Porttinari, UD_Romanian-RRT, UD_Russian-GSD, UD_Scottish_Gaelic-ARCOSG, UD_Serbian-SET, UD_Sindhi-Isra, UD_Slovak-SNK, UD_Slovenian-SSJ, UD_Spanish-GSD, UD_Swedish-Talbanken, UD_Thai-TUD, UD_Turkish-BOUN, UD_Ukrainian-ParlaMint, UD_Western_Armenian-ArmTDP

In addition, the following datasets were used for training, which were specially licensed from the National Institute for Japanese Language and Linguistics exclusively for training this model.

- UD_Japanese-BCCWJ (excluding PN newspaper articles)

- UD_Japanese-CEJC

84-lang-99-treebank-non-commercial-v0 (CC BY-NC-SA 4.0)

This model is made available for non-commercial use, including academic use; commercial use is strictly prohibited.

$ omnes-flores --m megagonlabs/omnes-flores-84-lang-99-treebank-non-commercial-v0 < text_file > conllu_file

The Japanese word unit is the Long Unit Word (LUW, 国語研長単位) defined by the National Institute for Japanese Language and Linguistics.

The following 40 UD treebanks, which have both a commercially available license and over 40k UD tokens in the train set, were selected to train the LoRA models of omnes-flores-84-lang-99-treebank-non-commercial-v0.

- UD_Armenian-ArmTDP UD_Belarusian-HSE UD_Bororo-BDT UD_Chinese-GSD UD_Chinese-GSDSimp UD_Croatian-SET UD_Czech-CAC UD_Danish-DDT UD_Dutch-Alpino UD_English-EWT UD_Estonian-EWT UD_Finnish-TDT UD_French-GSD UD_German-GSD UD_Haitian_Creole-Adolphe UD_Hebrew-IAHLTwiki UD_Icelandic-GC UD_Indonesian-GSD UD_Irish-IDT UD_Japanese-GSDLUW UD_Korean-Kaist UD_Latvian-LVTB UD_Lithuanian-ALKSNIS UD_Naija-NSC UD_Norwegian-Nynorsk UD_Persian-PerDT UD_Portuguese-Porttinari UD_Romanian-RRT UD_Russian-GSD UD_Scottish_Gaelic-ARCOSG UD_Serbian-SET UD_Sindhi-Isra UD_Slovak-SNK UD_Slovenian-SSJ UD_Spanish-GSD UD_Swedish-Talbanken UD_Thai-TUD UD_Turkish-BOUN UD_Ukrainian-ParlaMint UD_Western_Armenian-ArmTDP

In addition, the following 59 treebanks have been added to the training in this model for academic purposes:

- UD_Ancient_Greek-PTNK UD_Ancient_Greek-PROIEL UD_Ancient_Greek-Perseus UD_Ancient_Hebrew-PTNK UD_Basque-BDT UD_Bulgarian-BTB UD_Classical_Armenian-CAVaL UD_Classical_Chinese-Kyoto UD_Coptic-Scriptorium UD_Coptic-Bohairic UD_Egyptian-PC UD_Erzya-JR UD_Estonian-EDT UD_Galician-CTG UD_Galician-TreeGal UD_Georgian-GLC UD_Gothic-PROIEL UD_Greek-GDT UD_Hindi-HDTB UD_Hungarian-Szeged UD_Icelandic-IcePaHC UD_Icelandic-Modern UD_Italian-ISDT UD_Italian-Old UD_Khoekhoe-KDT UD_Kyrgyz-KTMU UD_Latin-CIRCSE UD_Latin-ITTB UD_Latin-LLCT UD_Latin-Perseus UD_Latin-PROIEL UD_Latin-UDante UD_Low_Saxon-LSDC UD_Maltese-MUDT UD_Manx-Cadhan UD_Middle_French-PROFITEROLE UD_Nheengatu-CompLin UD_North_Sami-Giella UD_Occitan-TTB UD_Old_Church_Slavonic-PROIEL UD_Old_East_Slavic-RNC UD_Old_East_Slavic-Ruthenian UD_Old_East_Slavic-TOROT UD_Old_East_Slavic-Birchbark UD_Old_French-PROFITEROLE UD_Old_Occitan-CorAG UD_Ottoman_Turkish-DUDU UD_Ottoman_Turkish-BOUN UD_Polish-MPDT UD_Pomak-Philotis UD_Sanskrit-Vedic UD_Sindhi-Isra UD_Urdu-UDTB UD_Uyghur-UDT UD_Vietnamese-VTB UD_Welsh-CCG UD_Wolof-WTB UD_Yiddish-YiTB UD_Zaar-Autogramm

Method

The components of the analysis pipeline use the following prompts:

Figure 1: An example of a language identification and sentence segmentation prompt instance. The parts that change from instance to instance are shown in italics. The shaded region in the assistant role corresponds to the range over which the loss gradient is computed during training, and to the decoded text during inference. At inference time, the span from the system role up to the assistant-role header is provided as input, and decoding of the subsequent segment continues until <eos> is generated.

Figure 2: An example of a word segmentation and language-specific part-of-speech tagging prompt instance.

Figure 3: An example of a dependency parsing prompt instance.
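
The caption of Figure 1 says that the loss is computed only over the assistant-role segment. A common way to achieve this in supervised fine-tuning is to mask the prompt positions in the label sequence with the ignore index of the loss function. The sketch below shows that convention in plain Python; it is an assumption about how such masking is typically done, not the training code of omnes-flores, and the token IDs are hypothetical.

```python
IGNORE_INDEX = -100  # default ignore_index of PyTorch's cross-entropy loss


def build_labels(
    prompt_ids: list[int], response_ids: list[int], eos_id: int
) -> tuple[list[int], list[int]]:
    """Build input IDs and labels so that only the response and <eos> are scored."""
    input_ids = prompt_ids + response_ids + [eos_id]
    # Prompt positions are masked out; response positions keep their token IDs.
    labels = [IGNORE_INDEX] * len(prompt_ids) + response_ids + [eos_id]
    return input_ids, labels


# Hypothetical token IDs: a 4-token prompt, a 3-token response, and <eos> = 1.
input_ids, labels = build_labels([11, 12, 13, 14], [21, 22, 23], eos_id=1)
print(input_ids)
print(labels)
```

At inference time only the prompt part would be fed to the model, and generation would stop once the end-of-sequence token is produced.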

Evaluation Result

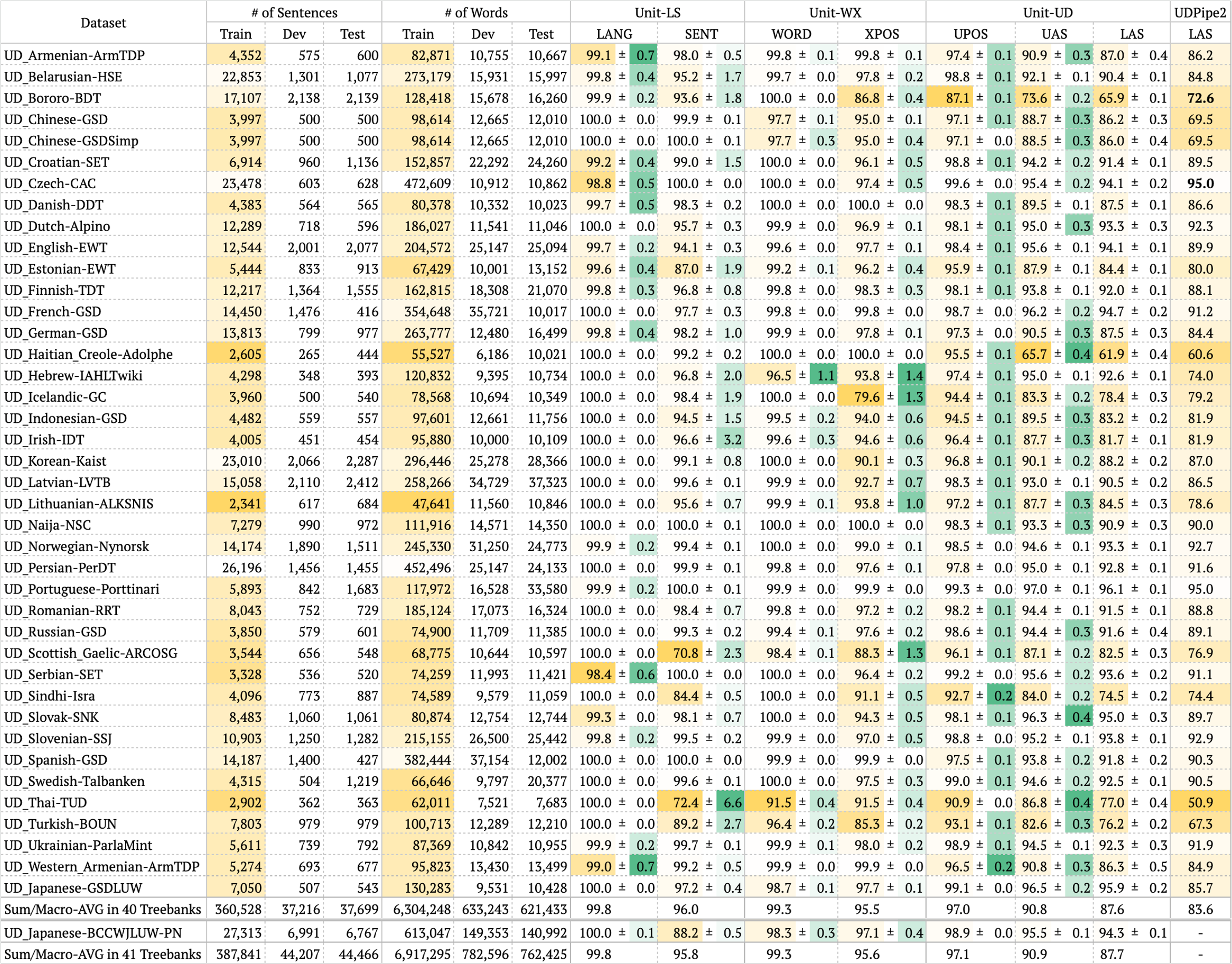

Table 1: Accuracy of the proposed method and UDPipe2 on 41 treebanks (average of 4 trials ± sample standard deviation). Yellow highlights indicate relatively small training data or relatively low accuracy. Green highlights indicate relatively large sample standard deviation.
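
The "average ± sample standard deviation" entries in Table 1 can be reproduced from per-trial scores with the standard library, where `statistics.stdev` uses the n − 1 denominator of the sample standard deviation. The four scores below are hypothetical values chosen for illustration and are not taken from the table.

```python
from statistics import mean, stdev


def summarize(scores: list[float]) -> tuple[float, float]:
    """Return the mean and the sample standard deviation (n - 1 denominator)."""
    return mean(scores), stdev(scores)


# Hypothetical accuracy scores from 4 trials.
trial_scores = [90.0, 91.0, 92.0, 93.0]
avg, sd = summarize(trial_scores)
print(f"{avg:.2f} ± {sd:.2f}")
```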

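For details, see the NLP2026 paper (多言語統語解析処理のためのMulti-task LoRA SFT方式の評価; in English, roughly "Evaluation of a Multi-task LoRA SFT Method for Multilingual Syntactic Analysis") and its poster material (written in Japanese).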

Acknowledgements

This work was conducted as part of a collaborative research project between Recruit Co., Ltd. and the National Institute for Japanese Language and Linguistics.

Citations

You are encouraged to cite one of the following papers if you use omnes-flores models:

@inproceedings{matsuda-etal-2025-step,

title = "Step-by-step Instructions and a Simple Tabular Output Format Improve the Dependency Parsing Accuracy of {LLM}s",

author = "Matsuda, Hiroshi and

Ma, Chunpeng and

Asahara, Masayuki",

editor = "Sagae, Kenji and

Oepen, Stephan",

booktitle = "Proceedings of the 18th International Conference on Parsing Technologies (IWPT, SyntaxFest 2025)",

month = aug,

year = "2025",

address = "Ljubljana, Slovenia",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2025.iwpt-1.2/",

pages = "11--19",

ISBN = "979-8-89176-294-7",

abstract = "Recent advances in large language models (LLMs) have enabled impressive performance in various tasks. However, standard prompting often struggles to produce structurally valid and accurate outputs, especially in dependency parsing. We propose a novel step-by-step instruction strategy, where universal part-of-speech tagging precedes the prediction of syntactic heads and dependency labels, and a simplified CoNLL-U like output format, our method achieves state-of-the-art accuracy on Universal Dependencies datasets across 17 languages without hallucination or contamination. We further show that multilingual fine-tuning simultaneously improves cross-language generalization performance. Our results highlight the effectiveness of explicit reasoning steps in LLM-based parsing and offer a scalable, format-consistent alternative to bracket-based approaches."

}

@misc{matsuda2025stepbystepinstructionssimpletabular,

title={Step-by-step Instructions and a Simple Tabular Output Format Improve the Dependency Parsing Accuracy of LLMs},

author={Hiroshi Matsuda and Chunpeng Ma and Masayuki Asahara},

year={2025},

eprint={2506.09983},

archivePrefix={arXiv},

primaryClass={cs.CL},

url={https://arxiv.org/abs/2506.09983},

}

Version History

0.1.0

- 2026-03-09 Release 0.1.0